As the researchers analyzed how students completed their work on computers, they noticed that students who had access to AI or a human were less likely to refer to the reading materials. These two groups revised their essays primarily by interacting with ChatGPT or chatting with the human. Those who had only the checklist spent the most time looking over their essays.

The AI group spent less time evaluating their essays and making sure they understood what the assignment was asking them to do. The AI group was also prone to copying and pasting text that the bot had generated, even though the researchers had prompted the bot not to write directly for the students. (It was apparently easy for the students to bypass this guardrail, even in the controlled laboratory.) The researchers mapped out all the cognitive processes involved in writing and saw that the AI students were most focused on interacting with ChatGPT.

“This highlights a crucial issue in human-AI interaction,” the researchers wrote. “Potential metacognitive laziness.” By that, they mean a dependence on AI assistance, offloading thought processes to the bot and not engaging directly with the tasks that are needed to synthesize, analyze and explain.

“Learners might become overly reliant on ChatGPT, using it to easily complete specific learning tasks without fully engaging in the learning,” the authors wrote.

The second study, by Anthropic, was released in April during the ASU+GSV investor conference in San Diego. In this study, in-house researchers at Anthropic studied how university students actually interact with its AI bot, called Claude, a competitor to ChatGPT. That methodology is a big improvement over surveys of students who may not accurately remember exactly how they used AI.

Researchers began by collecting all the conversations over an 18-day period with people who had created Claude accounts using their university addresses. (The description of the study says that the conversations were anonymized to protect student privacy.) Then the researchers filtered those conversations for signs that the person was likely to be a student seeking help with academics, school work, studying, learning a new concept or academic research. The researchers ended up with 574,740 conversations to analyze.

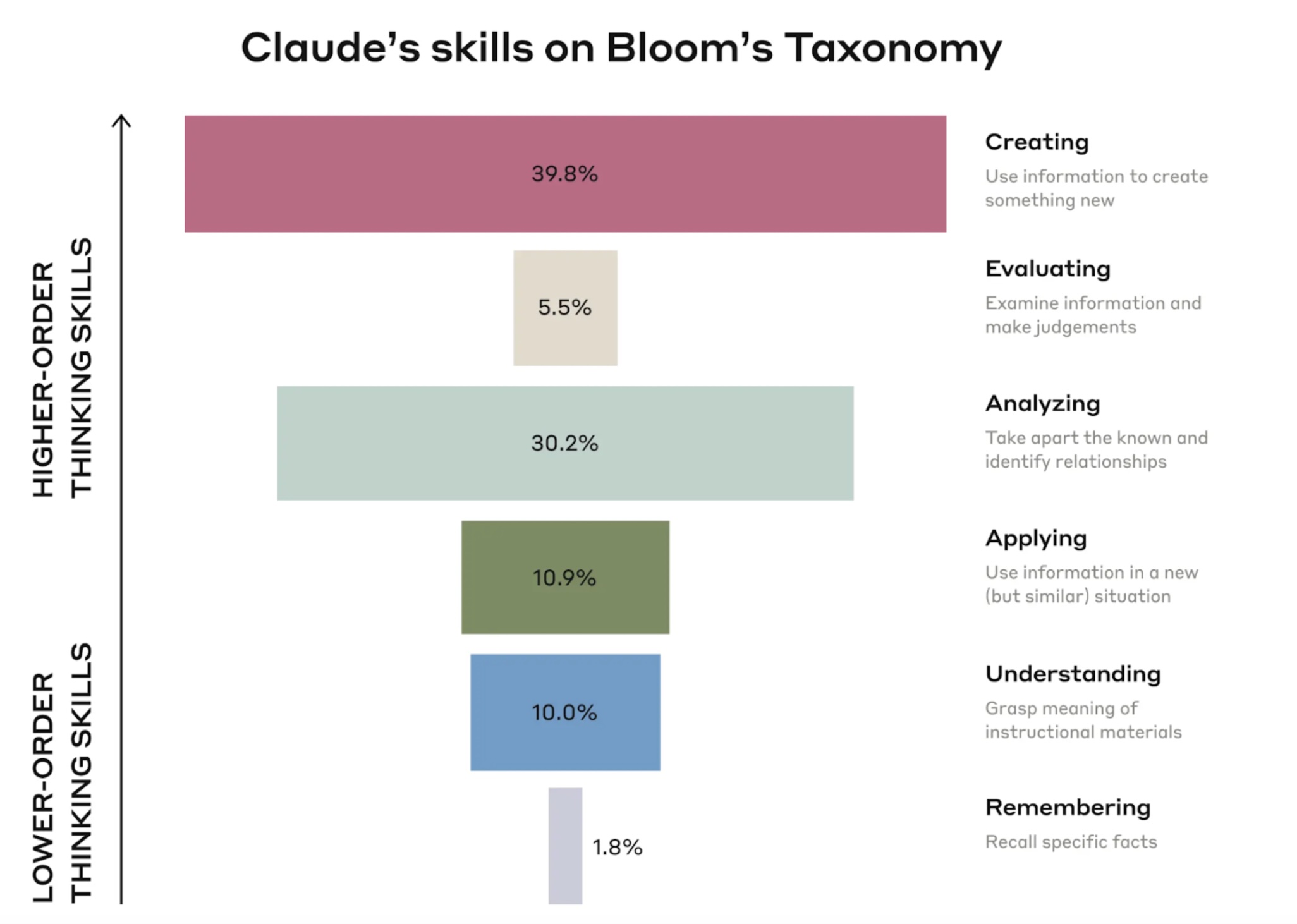

The results? Students primarily used Claude for creating things (40 percent of the conversations), such as creating a coding project, and for analyzing (30 percent of the conversations), such as analyzing legal concepts.

Creating and analyzing are the most popular tasks university students ask Claude to do for them

Anthropic’s researchers noted that these are higher-order cognitive functions, not basic ones, according to a hierarchy of skills known as Bloom’s Taxonomy.

“This raises questions about ensuring students don’t offload critical cognitive tasks to AI systems,” the Anthropic researchers wrote. “There are legitimate worries that AI systems may provide a crutch for students, stifling the development of foundational skills needed to support higher-order thinking.”

Anthropic’s researchers also noticed that students were asking Claude for direct answers almost half the time, with minimal back-and-forth engagement. The researchers described how, even when students were engaging collaboratively with Claude, the conversations might not be helping them learn more. For example, a student would ask Claude to “solve probability and statistics homework problems with explanations.” That might spark “multiple conversational turns between AI and the student, but still offloads significant thinking to the AI,” the researchers wrote.

Anthropic was hesitant to say it saw direct evidence of cheating. The researchers wrote about an example of students asking for direct answers to multiple-choice questions, but Anthropic had no way of knowing whether it was a take-home exam or a practice test. The researchers also found examples of students asking Claude to rewrite texts to avoid plagiarism detection.

The hope is that AI can improve learning through immediate feedback and by personalizing instruction for each student. But these studies show that AI is also making it easier for students not to learn.

AI advocates say that educators need to redesign assignments so that students cannot complete them by asking AI to do it for them, and to teach students how to use AI in ways that maximize learning. To me, this seems like wishful thinking. Real learning is hard, and if there are shortcuts, it is human nature to take them.

Elizabeth Wardle, director of the Howe Center for Writing Excellence at Miami University, is worried about both writing and human creativity.

“Writing is not correctness or avoiding error,” she posted on LinkedIn. “Writing is not just a product. The act of writing is a form of thinking and learning.”

Wardle warned of the long-term effects of too much reliance on AI. “When people use AI for everything, they are not thinking or learning,” she said. “And then what? Who will build, create and invent when we just rely on AI to do everything?”

It is a warning we all should heed.